By Andrew Wilson, Editor

There are a number of ways to increase the processing speed of image-processing software. These include partitioning the code among multiple dual- and quad-core processors, using FPGAs to offload point and neighborhood operations, and using specialized dedicated processors such as JPEG compression engines. While each of these options presents its own price/performance trade-offs, Stemmer Imaging (Puchheim, Germany; www.stemmer-imaging.de) is implementing another option in the latest release of its Common Vision Blox (CVB) software: offloading some of these functions to the graphics processing unit (GPU) on the graphics display card.

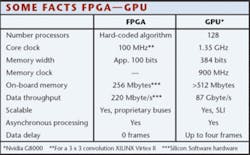

“From the outset,” says Martin Kersting, technical director at Stemmer Imaging, “we realized that specific architectures such as multiprocessors, FPGAs, and add-in graphics cards can be used to increase the speed of commonly used image-processing functions in different ways.” Comparing the performance of FPGAs with that of today’s fastest GPUs, however, shows that the attainable increase in speed is impressive, even allowing for a data delay of up to four frames (see Fig. 1).

Figure 1. When performance capabilities of FPGAs and fast GPUs are compared, it is obvious that even with a maximum four-frame data delay, the increase in speed attainable is impressive.

“Indeed,” says Kersting, “various benchmarks produced by a number of publications indicate a performance increase of between two and ten times when using GPUs rather than CPUs for functions such as convolution, rotation, and motion estimation.” Designed primarily for gaming applications, graphics boards from vendors such as ATI Technologies (Markham, ON, Canada; www.ati.amd.com) and Nvidia (Santa Clara, CA, USA; www.nvidia.com) incorporate multiple processors that, in the past, were divided into dedicated vertex shaders and pixel-processing units. In operation, a vertex shader takes the attributes of vertices, such as the corners of a triangle, and transforms them into a partial image that possesses color, texture, position, and direction.
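
To make the vertex-shader stage concrete, the following Python sketch mimics what one does for each vertex: it multiplies the vertex position by a 4 × 4 homogeneous transformation matrix and passes the other attributes (here only color) through unchanged. It is an illustration under stated assumptions, not GPU code: real shaders are written in a shading language such as HLSL, and the names `rotation_z_translation` and `vertex_shader` are hypothetical.

```python
import math


def rotation_z_translation(angle_deg, tx, ty, tz):
    """Build a 4 x 4 homogeneous matrix that rotates about the z axis and
    then translates by (tx, ty, tz). Hypothetical helper for illustration."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


def vertex_shader(vertex, matrix):
    """Toy vertex shader: transform the position, pass other attributes on."""
    x, y, z = vertex["position"]
    p = (x, y, z, 1.0)  # homogeneous coordinates
    out = [sum(matrix[row][k] * p[k] for k in range(4)) for row in range(4)]
    w = out[3]  # always 1 for this affine matrix; divide for generality
    return {**vertex, "position": (out[0] / w, out[1] / w, out[2] / w)}


triangle = [
    {"position": (1.0, 0.0, 0.0), "color": (255, 0, 0)},
    {"position": (0.0, 1.0, 0.0), "color": (0, 255, 0)},
    {"position": (0.0, 0.0, 1.0), "color": (0, 0, 255)},
]
m = rotation_z_translation(90.0, 0.0, 0.0, 5.0)
# A GPU would run one invocation per vertex, all in parallel.
transformed = [vertex_shader(v, m) for v in triangle]
```

Because each vertex is transformed independently of the others, many such invocations can run at once, which is the independence that multiple shader units exploit.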

Because many operations are required to render a lifelike image, multiple shaders are incorporated on each graphics engine. Using these vertex shaders, the rendered object can be transformed in 3-D space. After such transformation, pixel shaders shade individual pixels within the image.
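
The pixel-shading step can be sketched the same way. In the hypothetical example below, a Lambertian (diffuse) shader scales each pixel's base color by the cosine between its surface normal and a light direction, both assumed to be unit vectors. The point of the sketch is structural: each output pixel depends only on that pixel's own inputs, so all of them can be computed in parallel, which is also why many image-processing operations map well onto pixel shaders.

```python
def pixel_shader(base_color, normal, light_dir):
    """Toy pixel shader: Lambertian (diffuse) shading. The pixel's color is
    scaled by max(0, n . l), where n is the surface normal and l the light
    direction (both assumed to be unit vectors)."""
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    k = max(0.0, n_dot_l)
    return tuple(round(c * k) for c in base_color)


def shade_image(colors, normals, light_dir):
    """Run the pixel shader once per pixel. No call reads another pixel's
    result, so on a GPU all of them can execute concurrently."""
    return [[pixel_shader(c, n, light_dir) for c, n in zip(crow, nrow)]
            for crow, nrow in zip(colors, normals)]


# A 1 x 3 image: facing the light, tilted away, and edge-on.
colors = [[(200, 100, 50)] * 3]
normals = [[(0.0, 0.0, 1.0), (0.0, 0.6, 0.8), (0.0, 1.0, 0.0)]]
shaded = shade_image(colors, normals, light_dir=(0.0, 0.0, 1.0))
```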

“Until now, pixel shaders and vertex shaders have remained separate entities,” says Kersting. “However, with the introduction of processors such as the GeForce 8800 from Nvidia, the 128 128-bit floating-point processors on this 681-million-transistor chip can be dynamically allocated to geometry, vertex, physics, or pixel-shading operations.”
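
The benefit of dynamic allocation can be illustrated with a deliberately crude toy model. It assumes that one unit of shading work takes one cycle on one processor, ignores scheduling overhead and memory bandwidth, and compares a hypothetical fixed split of 32 vertex and 96 pixel units with a unified pool of 128 processors. None of these figures are GeForce 8800 measurements.

```python
import math

# Toy model (assumption): one unit of shading work takes one cycle on one
# processor; scheduling overhead and memory bandwidth are ignored.


def fixed_split_cycles(vertex_work, pixel_work, vertex_units, pixel_units):
    """Cycles needed when vertex units can only run vertex work and
    pixel units can only run pixel work; the slower side sets the pace."""
    return max(math.ceil(vertex_work / vertex_units),
               math.ceil(pixel_work / pixel_units))


def unified_cycles(vertex_work, pixel_work, units):
    """Cycles needed when any processor in the pool can take any work."""
    return math.ceil((vertex_work + pixel_work) / units)


# Hypothetical workloads: an image-processing frame (pixel work only) and
# a geometry-heavy rendering frame.
workloads = [("pixel only", 0, 12800), ("vertex heavy", 6400, 1280)]
for name, v, p in workloads:
    print(name, fixed_split_cycles(v, p, 32, 96), unified_cycles(v, p, 128))
# prints:
# pixel only 134 100
# vertex heavy 200 60
```

Image processing is almost entirely pixel work, so in a fixed design the vertex units would sit idle; in this model the unified pool finishes the pixel-only load in 100 cycles instead of 134.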

Indeed, with the introduction of its DirectX 10 graphics API, Microsoft also allows processors to be defined as geometry shaders, which take input from vertex shaders and create geometric objects or scene elements that are then rendered by the pixel shaders. In a graphics application, such a shader could perform displacement mapping, giving rendered surfaces a greater sense of depth and detail. While much has been written about this processor, one of the most thorough articles can be found at www.xbitlabs.com/articles/video/display/gf8800.html, where Alexey Stepin, Yaroslav Lyssenko, and Anton Shilov review both the architecture of the processor and the graphics cards that are now available.
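
A geometry shader differs from a vertex shader in that it receives a whole primitive and may emit several new ones. The hypothetical Python sketch below takes one triangle, splits each edge at its midpoint to emit four smaller triangles, and raises the new vertices by a caller-supplied height function. The height function is a crude stand-in for the texture lookup that real displacement mapping would use; the names and the subdivision scheme are illustrative assumptions, not DirectX 10 API calls.

```python
def midpoint(p, q):
    """Midpoint of two 3-D points."""
    return tuple((a + b) / 2.0 for a, b in zip(p, q))


def geometry_shader(triangle, height=lambda x, y: 0.0):
    """Toy geometry shader (hypothetical): one triangle in, four out.

    Each edge is split at its midpoint and the new vertices are raised in z
    by height(x, y), a crude stand-in for the texture lookup used in real
    displacement mapping. The original corners are left unchanged."""
    a, b, c = triangle

    def displaced(p):
        x, y, z = p
        return (x, y, z + height(x, y))

    ab = displaced(midpoint(a, b))
    bc = displaced(midpoint(b, c))
    ca = displaced(midpoint(c, a))
    # Emit four triangles that tile the original one.
    return [(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)]


tri = ((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0))
bumpy = geometry_shader(tri, height=lambda x, y: 0.5)
```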

“The architecture of each graphics device that supports DirectX is complex,” says Kersting. However, the DirectX API and the Microsoft High Level Shader Language (HLSL) compiler let developers of image-processing code move images to and from a camera interface such as GigE, transfer them between host CPU memory and graphics memory, and program the shading engines in a high-level language.

“Of course,” says Kersting, “to use these processors, images have to be transferred to and from the VGA card, resulting in a delay between image capture and execution of image-processing code on the graphics processor.” However, the approach does enable extremely high-throughput applications that require no specialized hardware.
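
The trade-off Kersting describes, extra delay but high throughput, follows from pipelining: while the GPU processes one frame, the next can be uploaded and the previous one downloaded. The sketch below simulates a linear pipeline in which each stage handles one frame at a time. The stage durations (10 ms upload, 30 ms GPU processing, 10 ms download) and the 40-ms frame period are invented for illustration, not measured CVB figures, and `pipeline_finish_times` is a hypothetical helper.

```python
def pipeline_finish_times(num_frames, frame_period, stage_times):
    """Simulate frames flowing through a linear pipeline.

    Frame i arrives from the camera at i * frame_period (ms). Each stage,
    e.g. upload to the graphics card, GPU processing, download, works on
    one frame at a time, but different stages work on different frames
    concurrently. Returns (arrival, completion) pairs in ms."""
    stage_free = [0.0] * len(stage_times)  # when each stage is next idle
    results = []
    for i in range(num_frames):
        arrival = i * frame_period
        t = arrival
        for s, duration in enumerate(stage_times):
            start = max(t, stage_free[s])  # need the input and a free stage
            t = start + duration
            stage_free[s] = t
        results.append((arrival, t))
    return results


# Hypothetical timings: 10 ms upload, 30 ms GPU processing, 10 ms download,
# with a new frame every 40 ms.
for arrival, done in pipeline_finish_times(4, 40.0, [10.0, 30.0, 10.0]):
    print(f"frame captured at {arrival:5.1f} ms, result at {done:5.1f} ms")
```

With these numbers every frame emerges 50 ms after capture, longer than one frame period, yet a result is delivered every 40 ms, so the system keeps pace with the camera. Running the same 50 ms of work as a single non-overlapped stage (`pipeline_finish_times(3, 40.0, [50.0])`) gives completion times of 50, 100, and 150 ms, so the delay grows with every frame.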

To make GPU processing transparent to developers of machine-vision applications, Stemmer Imaging has developed a dedicated toolset for its CVB software. Kersting and his colleagues have added more than 40 functions to the package that can be called from within CVB applications. At present, these include image convolution, point operations between two images, processing four monochrome images in parallel, RGB-to-HSI conversion, Bayer-to-RGB conversion, flat-field correction, image rotation and rescaling, and functions for comparing host CPU and GPU processing.
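
Several of the listed functions are simple per-pixel operations, which is why they suit pixel shaders. The following Python sketch shows plausible CPU reference versions of three of them: flat-field correction using the common formula `(raw - dark) * mean(flat - dark) / (flat - dark)`, RGB-to-HSI conversion using the textbook geometric formulas, and packing four monochrome images into the four channels of one RGBA-style image so that a single per-pixel operation handles all four. These are assumptions about the kind of computation involved, not CVB's implementation or API; all function names are hypothetical.

```python
import math


def flat_field_correct(raw, dark, flat):
    """Flat-field correction (assumed formulation):
    out = (raw - dark) * mean(flat - dark) / (flat - dark).
    Images are lists of rows of numbers; pixels with zero gain become 0."""
    gain = [[f - d for f, d in zip(fr, dr)] for fr, dr in zip(flat, dark)]
    mean_gain = sum(map(sum, gain)) / sum(len(row) for row in gain)
    return [[(r - d) * mean_gain / g if g else 0.0
             for r, d, g in zip(rr, dr, gr)]
            for rr, dr, gr in zip(raw, dark, gain)]


def rgb_to_hsi(r, g, b):
    """Convert one RGB pixel (components in 0..1) to (H in degrees, S, I)."""
    total = r + g + b
    i = total / 3.0
    s = 0.0 if total == 0 else 1.0 - 3.0 * min(r, g, b) / total
    num = 0.5 * ((r - g) + (r - b))
    den = math.sqrt((r - g) ** 2 + (r - b) * (g - b))
    if den == 0:  # grey pixel: hue is undefined, report 0
        return 0.0, s, i
    theta = math.degrees(math.acos(max(-1.0, min(1.0, num / den))))
    h = theta if b <= g else 360.0 - theta
    return h, s, i


def pack4(m0, m1, m2, m3):
    """Interleave four monochrome images into one 4-channel image."""
    return [list(zip(a, b, c, d)) for a, b, c, d in zip(m0, m1, m2, m3)]


def unpack4(img):
    """Split a 4-channel image back into four monochrome images."""
    return [[[px[ch] for px in row] for row in img] for ch in range(4)]


def invert8_packed(img):
    """One point operation (8-bit inversion) applied to all four channels."""
    return [[tuple(255 - v for v in px) for px in row] for row in img]
```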

Figure 2. Stemmer Imaging has demonstrated a PC-based system using an Nvidia 8800 graphics card. The graphics processor performed a 3 × 3 Sobel filter on the image at 30 frames/s.

Recently, Stemmer Imaging demonstrated a PC-based system using an Nvidia 8800 graphics card. In addition to displaying images captured from a CCIR A11 monochrome camera, resized to 2k × 2k pixels, on the PC monitor, the graphics processor simultaneously applied a 3 × 3 Sobel filter to the image at 30 frames/s. In benchmarks performed on a 2.4-GHz Intel Core 2 Duo and the Nvidia 8800, both running a 5 × 5 filter, the Nvidia 8800 performed approximately five times faster (see Fig. 2).
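
For reference, a 3 × 3 Sobel filter estimates horizontal and vertical intensity gradients with two small kernels and combines them into a gradient magnitude. The plain-Python sketch below computes the same quantity on the CPU; it is not Stemmer Imaging's GPU implementation, and its border handling (edge pixels set to 0) is an assumption. Each output pixel depends only on its 3 × 3 neighborhood, which is what lets a GPU compute every output pixel in a separate pixel-shader invocation.

```python
import math

# Sobel kernels for horizontal (x) and vertical (y) gradients.
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel_magnitude(img):
    """3 x 3 Sobel gradient magnitude of a grayscale image (list of rows).

    The kernels are applied as a correlation; flipping them would only
    change the sign of gx and gy, not the magnitude. Border pixels, which
    lack a full neighborhood, are set to 0 (an assumed policy)."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0
            for j in range(3):
                for i in range(3):
                    p = img[y + j - 1][x + i - 1]
                    gx += SOBEL_X[j][i] * p
                    gy += SOBEL_Y[j][i] * p
            out[y][x] = math.hypot(gx, gy)
    return out


# A vertical step edge from 0 to 10: the response peaks along the step.
edge = [[0, 0, 10, 10] for _ in range(3)]
print(sobel_magnitude(edge)[1])  # [0.0, 40.0, 40.0, 0.0]
```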

While these functions use the pixel- and geometry-shading features of the graphics processor, no vertex-shader functions have yet been implemented. “In the future, however,” says Kersting, “we expect to implement lens-distortion correction and warping algorithms using these functions.” Although GPU processing is readily accessible through CVB, whether it pays off depends on the application: the delay of transferring images to and from the graphics card must be weighed against the speed-up, case by case.
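
Lens-distortion correction and warping are both inverse-mapping operations: for every output pixel, compute where it lies in the distorted input and fetch that value. The sketch below assumes a single-coefficient radial model, x_d = c + (x - c)(1 + k1 r^2), with the radius normalized by the half-diagonal and nearest-neighbor sampling; real calibrations typically use more coefficients and interpolated sampling. The function name and the parameter `k1` are hypothetical, and this is not a description of how Stemmer Imaging intends to implement the feature.

```python
def undistort_radial(img, k1):
    """Correct simple radial (barrel/pincushion) distortion by inverse mapping.

    Assumed model: for every output pixel at x, the source position in the
    distorted input is c + (x - c) * (1 + k1 * r^2), where c is the image
    center and r the distance from it, normalized by the half-diagonal.
    The nearest input pixel is copied; positions outside the input give 0."""
    h, w = len(img), len(img[0])
    cx, cy = (w - 1) / 2.0, (h - 1) / 2.0
    norm2 = cx * cx + cy * cy or 1.0  # squared half-diagonal (avoid 0)
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx, dy = x - cx, y - cy
            scale = 1.0 + k1 * (dx * dx + dy * dy) / norm2
            sx = round(cx + dx * scale)  # nearest-neighbor source column
            sy = round(cy + dy * scale)  # nearest-neighbor source row
            if 0 <= sx < w and 0 <= sy < h:
                out[y][x] = img[sy][sx]
    return out
```

Since every output pixel is computed independently, the double loop is exactly the kind of work a pixel shader can parallelize; with `k1 = 0` the function returns the input unchanged.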